Presentation at Embedded World 2023, Nuremberg, Germany

5G Radio addresses machine-type communication with low energy demands. 5G Edge Clouds reduce processing latency by bringing extra compute close to the “edge”. To be cost-efficient, Edge Cloud systems are built on modern datacenter technology such as NVMe. NVMe runs over PCIe, which is typically implemented for short ranges of up to 30 cm. For composability, however, there is so-called NVMe-over-Fabric, built on top of a PCIe Long-Range Tunnel.

With 3GPP Release 15, 5G Radio starts to meet the requirements for implementing such a PCIe Long-Range Tunnel, enabling the design of composable Edge Cloud Storage over 5G.

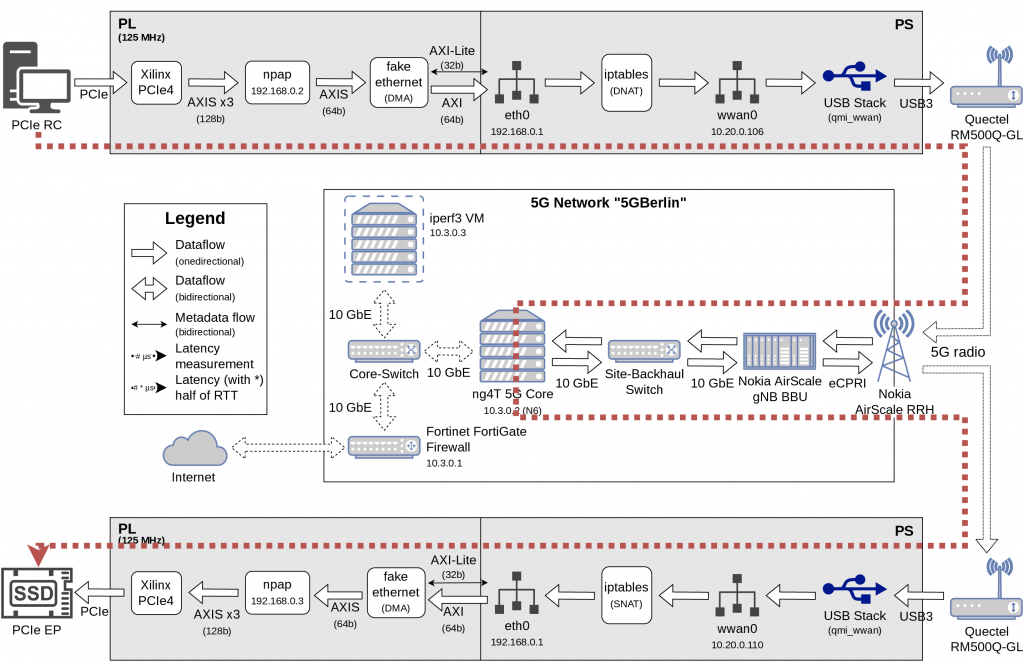

Our presentation at Embedded World 2023 demonstrates a proof-of-concept “NVMe-over-5G” implementation along with a methodology for detailed latency analysis. We start with key aspects of 5G URLLC, PCIe and Long-Range PCIe, then introduce NVMe and composable datacenter architectures. We then present our NVMe-over-5G setup running on the experimental 5G system at Fraunhofer and TU Berlin. We use COTS hardware comprising an AMD/Xilinx UltraScale+ MPSoC and 5G modems to connect a standard NVMe SSD to a standard CPU running Linux and the NVMe protocol. We discuss latency numbers, i.e., how much each intermediate component contributes to the total PCIe/NVMe end-to-end latency. Our findings show that, in general, 5G URLLC can meet the PCIe/NVMe requirements, provided that certain optimizations are implemented to reduce tail latency.
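To illustrate the kind of per-component latency accounting described above, here is a minimal Python sketch. It is not the methodology from the presentation: the component names (`host_pcie`, `radio_link`, `ssd`), the functions `latency_breakdown` and `percentile`, and all numbers are hypothetical placeholders, not measurements. It assumes each request has one latency sample per component, in microseconds, and reports each component's share of the mean end-to-end latency together with a nearest-rank percentile for the tail.

```python
import math
from statistics import mean


def latency_breakdown(samples):
    """Return per-request totals and each component's share of the mean total.

    samples maps a component name to a list of per-request latencies
    (microseconds); all lists cover the same requests in the same order.
    """
    names = list(samples)
    n = len(samples[names[0]])
    # End-to-end latency of a request is the sum over all components.
    totals = [sum(samples[name][i] for name in names) for i in range(n)]
    mean_total = mean(totals)
    shares = {name: mean(samples[name]) / mean_total for name in names}
    return totals, shares


def percentile(values, p):
    """Nearest-rank percentile, used here to look at tail latency."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


# Synthetic placeholder data (hypothetical, NOT measured values).
samples = {
    "host_pcie": [2, 2, 2, 2],
    "radio_link": [6, 6, 6, 18],  # one slow request dominates the tail
    "ssd": [2, 2, 2, 2],
}

totals, shares = latency_breakdown(samples)
print("median total [us]:", percentile(totals, 50))
print("p99 total [us]:", percentile(totals, 99))
for name, share in shares.items():
    print(f"{name}: {share:.0%} of mean end-to-end latency")
```

In this toy data set the median stays low while a single slow request on the hypothetical radio link sets the 99th percentile, which is the kind of effect that tail-latency optimizations target.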